Kubernetes K8S: Taints and Tolerations Explained, with Examples

Host planning

| Server name (hostname) | OS version | Spec (CPU/memory/disk) | Internal IP | External IP (simulated) |
|---|---|---|---|---|
| k8s-master | CentOS7.7 | 2C/4G/20G | 172.16.1.110 | 10.0.0.110 |
| k8s-node01 | CentOS7.7 | 2C/4G/20G | 172.16.1.111 | 10.0.0.111 |
| k8s-node02 | CentOS7.7 | 2C/4G/20G | 172.16.1.112 | 10.0.0.112 |

Overview of Taints and Tolerations

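Node affinity and Pod affinity attract Pods to a set of nodes [grouped by topology domain], either as a preference or as a hard requirement. Taints are the opposite: they allow a node to repel a set of Pods.

Tolerations are applied to Pods and allow (but do not require) a Pod to be scheduled onto nodes with matching taints.

Taints and tolerations work together to ensure that Pods are not scheduled onto inappropriate nodes. One or more taints are applied to a node; this marks that the node should not accept any Pod that does not tolerate those taints.

Note: in everyday use, Pods are not scheduled onto the k8s master node precisely because the master node carries a taint.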

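Taints

Composition of a taint

The `kubectl taint` command sets a taint on a Node. Once a Node is tainted, it and Pods repel each other: the Node can refuse to have Pods scheduled onto it, and can even evict Pods that are already running on it.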

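Each taint has the following form:

`key=value:effect`

The key and value act as the taint's label, and the effect describes what the taint does. The following taint effects are currently supported:

- NoSchedule: K8S will not schedule Pods onto a Node with this taint

- PreferNoSchedule: K8S will try to avoid scheduling Pods onto a Node with this taint

- NoExecute: K8S will not schedule Pods onto a Node with this taint, and will also evict Pods already running on the Node unless they tolerate the taint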
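To make the `key=value:effect` format concrete, here is a minimal Python sketch that parses such a string and rejects unknown effects. `Taint` and `parse_taint` are hypothetical helpers written for illustration; they are not part of kubectl or any Kubernetes client library, and they do not enforce the full key/value syntax rules that Kubernetes applies.

```python
from dataclasses import dataclass

# The three effects listed above; anything else is rejected.
VALID_EFFECTS = {"NoSchedule", "PreferNoSchedule", "NoExecute"}


@dataclass(frozen=True)
class Taint:
    key: str
    value: str  # may be empty, e.g. "node-role.kubernetes.io/master=:PreferNoSchedule"
    effect: str


def parse_taint(spec: str) -> Taint:
    """Parse 'key=value:effect' (or 'key:effect') into a Taint (hypothetical helper)."""
    # Split on the last ':' so keys containing '/' or '.' stay intact.
    key_value, sep, effect = spec.rpartition(":")
    if not sep or effect not in VALID_EFFECTS:
        raise ValueError(f"invalid or missing effect in {spec!r}")
    key, _, value = key_value.partition("=")
    if not key:
        raise ValueError(f"empty taint key in {spec!r}")
    return Taint(key, value, effect)


if __name__ == "__main__":
    print(parse_taint("check=zhang:NoSchedule"))
    # Taint(key='check', value='zhang', effect='NoSchedule')
```

The value part is optional, which is why a taint such as `node-role.kubernetes.io/master:NoSchedule` parses with an empty value.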

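The NoExecute effect in detail

A taint with effect NoExecute affects Pods that are already running on the node:

- If the Pod does not tolerate the NoExecute taint, the Pod is evicted immediately

- If the Pod tolerates the NoExecute taint and its toleration does not specify tolerationSeconds, the Pod keeps running on the node indefinitely

- If the Pod tolerates the NoExecute taint and its toleration specifies tolerationSeconds, that value is how long the Pod may keep running on the node after the taint is added before it is evicted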
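The three cases above can be condensed into one small function. This is a hypothetical Python sketch of the rules as stated, not Kubernetes source code: it returns how many seconds a running Pod has left before eviction, with `None` meaning "never evicted by this taint". It also assumes the documented API behavior that a zero or negative tolerationSeconds is treated as 0, i.e. immediate eviction.

```python
from typing import Optional


def seconds_until_eviction(tolerated: bool, toleration_seconds: Optional[int]) -> Optional[int]:
    """Time a running Pod may stay after a NoExecute taint appears (hypothetical helper).

    Returns 0 for immediate eviction and None for "never evicted by this taint".
    """
    if not tolerated:
        # Case 1: no matching toleration -> evicted right away.
        return 0
    if toleration_seconds is None:
        # Case 2: matching toleration without tolerationSeconds -> stays indefinitely.
        return None
    # Case 3: matching toleration with tolerationSeconds -> stays that long.
    # Zero or negative values are treated as 0 (immediate eviction).
    return max(toleration_seconds, 0)


if __name__ == "__main__":
    print(seconds_until_eviction(True, 3600))  # 3600
```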

Setting taints

Viewing taints

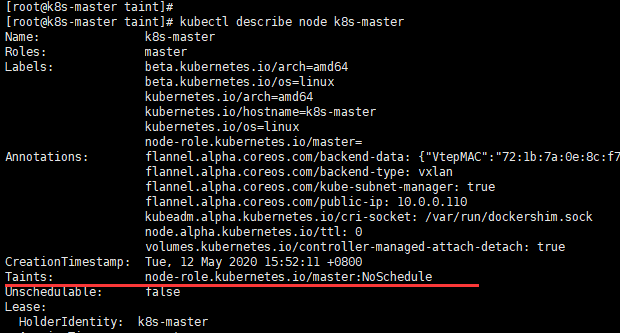

On the k8s master node

`kubectl describe node k8s-master`

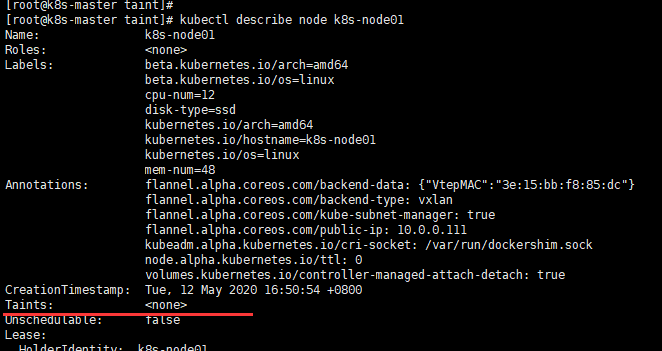

On a k8s worker node

`kubectl describe node k8s-node01`

Adding a taint

`[root@k8s-master taint]# kubectl taint nodes k8s-node01 check=zhang:NoSchedule`

This adds a taint to k8s-node01 with key check, value zhang and effect NoSchedule. It means no Pod can be scheduled onto k8s-node01 unless it has a matching toleration.

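Removing a taint

`[root@k8s-master taint]# kubectl taint nodes k8s-node01 check:NoExecute-`

The trailing `-` removes a taint. The command above removes the taint with key check and effect NoExecute; to remove the NoSchedule taint added in the previous step, use `check:NoSchedule-` instead, and `check-` removes every taint with key check regardless of its effect.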
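The two removal forms can be modeled as a filter over a node's taint list. This is an illustrative Python sketch only: `remove_taints` is a hypothetical helper, taints are plain `(key, value, effect)` tuples, and it covers just the `key:effect-` and `key-` forms described above rather than everything `kubectl taint` accepts.

```python
from typing import List, Tuple

# A taint as (key, value, effect).
TaintTuple = Tuple[str, str, str]


def remove_taints(taints: List[TaintTuple], spec: str) -> Tuple[List[TaintTuple], bool]:
    """Apply a 'key:effect-' or 'key-' removal spec to a taint list (hypothetical helper).

    Returns the remaining taints and whether anything was removed.
    """
    if not spec.endswith("-"):
        raise ValueError(f"removal spec must end with '-': {spec!r}")
    key, _, effect = spec[:-1].partition(":")
    remaining = [
        t for t in taints
        # Removed when the key matches and, if an effect was given, the effect matches too.
        if not (t[0] == key and (not effect or t[2] == effect))
    ]
    return remaining, len(remaining) != len(taints)


if __name__ == "__main__":
    node01 = [("check", "zhang", "NoSchedule")]
    print(remove_taints(node01, "check:NoExecute-"))   # ([('check', 'zhang', 'NoSchedule')], False)
    print(remove_taints(node01, "check:NoSchedule-"))  # ([], True)
```

The usage lines show why the effect matters: asking to remove `check:NoExecute` leaves a `check=zhang:NoSchedule` taint untouched.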

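Tolerations

A tainted Node repels Pods according to the taint's effect (NoSchedule, PreferNoSchedule or NoExecute), so to a varying degree Pods will not be scheduled onto that Node.

However, we can set tolerations on a Pod: a Pod whose toleration matches a taint tolerates that taint and can be scheduled onto the tainted Node.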

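pod.spec.tolerations example

`tolerations:`

Important notes:

- key, value and effect must match the taint set on the Node

- When operator is Exists, value is ignored and only key and effect need to match; with the default operator Equal, value must match as well

- tolerationSeconds applies to tolerations of NoExecute taints: when tolerationSeconds [the toleration time] is set, it is how long the Pod may keep running on the node before being evicted.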

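When key is not specified

When neither key nor effect is specified and operator is Exists, the toleration tolerates all taints [it matches every key, value and effect]

`tolerations:`

When effect is not specified

When effect is not specified, the toleration matches every effect of taints with the given key

`tolerations:`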
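The matching rules above (key, value and effect must agree; Exists ignores value; an empty key with Exists matches every taint; an empty effect matches every effect) fit in a single predicate. The following Python sketch is modeled on the upstream Kubernetes matching logic as we understand it; tolerations and taints are plain dicts using the pod-spec field names, and `tolerates` is a hypothetical helper, not a client-library function.

```python
from typing import Dict


def tolerates(toleration: Dict[str, str], taint: Dict[str, str]) -> bool:
    """Return True if a single toleration matches a single taint (hypothetical helper)."""
    effect = toleration.get("effect", "")
    # An empty effect matches every effect.
    if effect and effect != taint["effect"]:
        return False
    key = toleration.get("key", "")
    # An empty key matches every key (the API only allows this with operator Exists).
    if key and key != taint["key"]:
        return False
    operator = toleration.get("operator", "Equal")  # Equal is the default operator
    if operator == "Exists":
        return True  # value is ignored
    if operator == "Equal":
        return toleration.get("value", "") == taint.get("value", "")
    return False  # unknown operator


if __name__ == "__main__":
    taint = {"key": "check", "value": "zhang", "effect": "NoSchedule"}
    print(tolerates({"key": "check", "operator": "Exists"}, taint))  # True
    print(tolerates({"key": "check", "value": "zhang", "effect": "NoExecute"}, taint))  # False
```

The second call fails only because the effects differ, which is the detail behind the multiple-tolerations example later in this article.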

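When there are multiple masters

When there are multiple masters, you can configure them as follows to avoid wasting their resources:

`kubectl taint nodes Node-name node-role.kubernetes.io/master=:PreferNoSchedule`

Replace Node-name with the master's node name. With PreferNoSchedule the scheduler tries to avoid, but does not forbid, placing Pods on the masters. This command adds a new taint rather than replacing the default `node-role.kubernetes.io/master:NoSchedule` taint, which keeps blocking Pods until it is removed, e.g. with `kubectl taint nodes Node-name node-role.kubernetes.io/master:NoSchedule-`.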

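How multiple taints and multiple tolerations are evaluated

A node can carry multiple taints and a Pod can carry multiple tolerations. Kubernetes processes them like a filter: start with all of the node's taints, then ignore every taint matched by one of the Pod's tolerations; the remaining un-ignored taints have their indicated effects on the Pod. In particular:

- If at least one un-ignored taint has effect NoSchedule, Kubernetes will not schedule the Pod onto that node

- If no un-ignored taint has effect NoSchedule but at least one has effect PreferNoSchedule, Kubernetes will try not to schedule the Pod onto that node

- If at least one un-ignored taint has effect NoExecute, the Pod is evicted from the node (if it is already running there) and will not be scheduled onto the node (if it is not yet running there)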
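The filter just described can be sketched in Python as follows. It repeats the single-toleration matching rule, drops every matched taint, and then applies the three bullet points. Assumptions: taints and tolerations are plain dicts with pod-spec field names, all function names are hypothetical, operators other than Exists are treated as Equal, and tolerationSeconds is not modeled (a matched NoExecute taint is treated as tolerated forever).

```python
from typing import Dict, List

Taint = Dict[str, str]
Toleration = Dict[str, str]


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Single toleration vs single taint (key, operator, value, effect)."""
    if tol.get("effect") and tol["effect"] != taint["effect"]:
        return False
    if tol.get("key") and tol["key"] != taint["key"]:
        return False
    if tol.get("operator", "Equal") == "Exists":
        return True
    return tol.get("value", "") == taint.get("value", "")


def untolerated(taints: List[Taint], tolerations: List[Toleration]) -> List[Taint]:
    """Start from all taints and drop those matched by at least one toleration."""
    return [t for t in taints if not any(tolerates(tol, t) for tol in tolerations)]


def scheduling_decision(taints: List[Taint], tolerations: List[Toleration]) -> str:
    """'reject', 'avoid' (soft) or 'allow' for a Pod not yet on the node."""
    effects = {t["effect"] for t in untolerated(taints, tolerations)}
    if effects & {"NoSchedule", "NoExecute"}:
        return "reject"
    if "PreferNoSchedule" in effects:
        return "avoid"
    return "allow"


def should_evict(taints: List[Taint], tolerations: List[Toleration]) -> bool:
    """True if a Pod already running on the node would be evicted."""
    return any(t["effect"] == "NoExecute" for t in untolerated(taints, tolerations))


if __name__ == "__main__":
    # Taints taken from commands used in this article's examples.
    node01 = [{"key": "check-nginx", "value": "web", "effect": "PreferNoSchedule"}]
    node02 = [{"key": "check-mem", "value": "memdb", "effect": "NoExecute"}]
    print(scheduling_decision(node01, []), scheduling_decision(node02, []))  # avoid reject
    print(should_evict(node02, []))  # True
```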

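Taint and toleration examples

Example: a Node taint with effect NoExecute

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

[None: no taints are added in this step]

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

Apart from the default taint on k8s-master, k8s-node01 and k8s-node02 have no taints.

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f noexecute_tolerations.yaml`

As shown above, the Pods are evenly distributed across k8s-node01 and k8s-node02. Next, add a NoExecute taint to k8s-node02:

`kubectl taint nodes k8s-node02 check-mem=memdb:NoExecute`

All node taints are now:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Then check the Pod information again

`[root@k8s-master taint]# kubectl get pod -o wide`

As shown above, the Pods on k8s-node02 have been evicted, and the evicted Pods were rescheduled onto k8s-node01.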

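When the Pod has no tolerations

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

`kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule`

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f no_tolerations.yaml`

As shown above, because the check-nginx taint on k8s-node02 has effect NoSchedule, the Pods cannot be scheduled onto that node. The check-nginx taint on k8s-node01 has effect PreferNoSchedule [try not to schedule onto this node], but since k8s-node01 is the only node that satisfies the scheduling conditions, all Pods were scheduled onto it.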

When the Pod has a single toleration

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

`kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule`

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f one_tolerations.yaml`

As shown above, the Pods are now preferentially scheduled onto k8s-node02, and the scheduler tries to avoid k8s-node01. With only a single Pod, it would normally be scheduled onto k8s-node02 every time.

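When the Pod has multiple tolerations

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

`kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule`

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f multi_tolerations.yaml`

As shown above, the Pod's tolerations are check-nginx=web:NoSchedule and check-redis=:NoSchedule. Because the check-nginx toleration has effect NoSchedule, it does not match the check-nginx=web:PreferNoSchedule taint on k8s-node01, so that taint stays in effect. The Pods are therefore preferentially scheduled onto k8s-node02, and the scheduler tries to avoid k8s-node01.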

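When the Pod tolerates all effects of a given taint key

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

`kubectl taint nodes k8s-node01 check-redis=memdb:NoSchedule`

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f key_tolerations.yaml`

As shown above, the Pod's toleration is check-redis=: with operator Exists, so only the key of the node taint needs to match; the taint's value and effect do not matter. The Pod can therefore be scheduled onto both k8s-node01 and k8s-node02.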

When the Pod tolerates all taints

Remember to clear any existing taints first so they do not affect the test.

Target taints:

`k8s-master taint: node-role.kubernetes.io/master:NoSchedule [built-in k8s taint, used as is; no need to add it]`

Adding the taints:

`kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule`

Viewing the taints:

`kubectl describe node k8s-master | grep 'Taints' -A 5`

YAML file

`[root@k8s-master taint]# pwd`

Run the YAML file

`[root@k8s-master taint]# kubectl apply -f all_tolerations.yaml`

As shown above, the Pod tolerates all taints, so it can be scheduled onto every k8s node.


Done!